Avoiding the Gray Areas

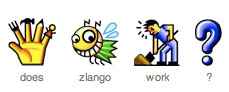

I just caught the last of Shawn Henry’s SXSW panel. Key takeaway: there are white areas of things that are good to do for accessibility and black areas of things that are bad for accessibility, so avoid worrying about the gray area in the middle. She mentioned the ability of web accessibility experts to endlessly debate the ins and outs of alt text. For example:

- Should alt text be used to paint a thousand words?

- Mini-FAQ about the alternate text of images

- Alt Text, Less Can be More

- “Alt Text” search on WebAIM Discussion List

- Search for “alt text” on The Web Accessibility Initiative Interest Group (WAI IG) mailing list

These discussions are helpful and essential for establishing best practices. However, they become harmful when a developer gets tied up arguing about “gray areas” instead of building accessible content.